Finite-temperature quantum topological order in three dimensions https://arxiv.org/abs/2503.02928

arXiv.org


We identify a three-dimensional system that exhibits long-range entanglement at sufficiently small but nonzero temperature; it therefore constitutes a quantum topological order at finite...


Find First, Track Next: Decoupling Identification and Propagation in Referring Video Object Segmentation https://arxiv.org/abs/2503.03492

arXiv.org


Referring video object segmentation aims to segment and track a target object in a video using a natural language prompt. Existing methods typically fuse visual and textual features in a highly...


Forwarded from Hacker News

Show HN: Factorio Learning Environment – Agents Build Factories (🔥 Score: 159+ in 2 hours)

Link: https://readhacker.news/s/6qKug

Comments: https://readhacker.news/c/6qKug

I'm Jack, and I'm excited to share a project that has channeled my Factorio addiction recently: the Factorio Learning Environment (FLE).

FLE is an open-source framework for developing and evaluating LLM agents in Factorio. It provides a controlled environment where AI models can attempt complex automation, resource management, and optimisation tasks in a grounded world with meaningful constraints.

A critical advantage of Factorio as a benchmark is its unbounded nature. Unlike many evals that are quickly saturated by newer models, Factorio's geometric complexity scaling means it won't be "solved" in the next 6 months (or possibly even years). This allows us to meaningfully compare models by the order of magnitude of resources they can produce, creating a benchmark with longevity.

The project began 18 months ago: after years of playing Factorio, I recognised its potential as an AI research testbed. A few months ago, our team (myself, Akbir, and Mart) came together to create a benchmark that tests agent capabilities in spatial reasoning and long-term planning.

Two technical innovations drove this project forward: First, we discovered that piping Lua into the Factorio console over TCP enables running (almost) arbitrary code without directly modding the game. Second, we developed a first-class Python API that wraps these Lua programs to provide a clean, type-hinted interface for AI agents to interact with Factorio through familiar programming paradigms.
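The post does not name the TCP protocol; Factorio's built-in RCON server (which uses the Source RCON packet framing) is the likely candidate, so the sketch below assumes it. It shows how a Lua snippet becomes a console command and then an RCON packet. `count_entities_command` is a hypothetical example of a typed wrapper, not FLE's actual API.

```python
from __future__ import annotations

import re
import struct

# Source RCON packet types (assumed to be what Factorio's RCON server speaks).
SERVERDATA_AUTH = 3
SERVERDATA_EXECCOMMAND = 2
SERVERDATA_RESPONSE_VALUE = 0

_PROTOTYPE_NAME = re.compile(r"[a-z0-9-]+")


def encode_packet(request_id: int, packet_type: int, body: str) -> bytes:
    """Frame one packet: little-endian size, id, type, NUL-terminated body, then an empty NUL string."""
    payload = body.encode("utf-8") + b"\x00\x00"
    size = 4 + 4 + len(payload)  # id + type + payload; the size field does not count itself
    return struct.pack("<iii", size, request_id, packet_type) + payload


def decode_packet(data: bytes) -> tuple[int, int, str]:
    """Inverse of encode_packet for a single, complete packet."""
    size, request_id, packet_type = struct.unpack_from("<iii", data)
    body = data[12 : 4 + size - 2]  # drop the two trailing NUL bytes
    return request_id, packet_type, body.decode("utf-8")


def count_entities_command(entity_name: str) -> str:
    """Hypothetical typed tool: a console command that counts entities of one prototype on surface 1."""
    if not _PROTOTYPE_NAME.fullmatch(entity_name):
        # Refuse anything that could break out of the Lua string literal.
        raise ValueError(f"unexpected prototype name: {entity_name!r}")
    lua = f'rcon.print(game.surfaces[1].count_entities_filtered{{name="{entity_name}"}})'
    return "/silent-command " + lua
```

In a live session one would first authenticate with a `SERVERDATA_AUTH` packet carrying the password the server was started with (`--rcon-port` / `--rcon-password`), then write the command packet to the socket and decode the reply. `rcon.print` is what routes the Lua result back over RCON instead of to the in-game console.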

Agents interact with FLE through a REPL pattern:

1. They observe the world (seeing the output of their last action)

2. Generate Python code to perform their next action

3. Receive detailed feedback (including exceptions and stdout)
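The observe / generate / feedback loop can be sketched in plain Python. This is a minimal illustration, not FLE's code: `policy` is a hypothetical stand-in for the LLM, and in FLE the shared namespace would presumably expose the typed Factorio tools instead of starting empty.

```python
from __future__ import annotations

import contextlib
import io
import traceback
from typing import Callable


def run_step(code: str, namespace: dict) -> str:
    """Execute one agent-written program; return its stdout followed by any traceback."""
    buffer = io.StringIO()
    try:
        with contextlib.redirect_stdout(buffer):
            exec(code, namespace)  # the namespace persists between steps, like a REPL session
    except Exception:
        buffer.write(traceback.format_exc())  # exceptions become part of the feedback
    return buffer.getvalue()


def agent_loop(policy: Callable[[str], str], steps: int) -> list[str]:
    """Repeat: observe the last output, generate code, receive feedback."""
    namespace: dict = {}
    observation = ""  # nothing has been executed yet
    history = []
    for _ in range(steps):
        code = policy(observation)                # 2. the model writes Python for its next action
        observation = run_step(code, namespace)   # 3. feedback becomes the next observation (1.)
        history.append(observation)
    return history
```

Because the namespace is shared, variables defined in one step are visible in the next, and a failing step costs only that step: the traceback is returned to the agent rather than aborting the loop.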

We provide two main evaluation settings:

- Lab-play: 24 structured tasks with fixed resources

- Open-play: An unbounded task of building the largest possible factory on a procedurally generated map

We found that while LLMs show promising short-horizon skills, they struggle with spatial reasoning in constrained environments. They can discover basic automation strategies (like electric-powered drilling) but fail to achieve more complex automation (like electronic circuit manufacturing). Claude 3.5 Sonnet is currently the best model (by a significant margin).

The code is available at https://github.com/JackHopkins/factorio-learning-environment.

You'll need:

- Factorio (version 1.1.110)

- Docker

- Python 3.10+
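A small preflight check for the locally verifiable requirements, written as a hypothetical helper (not part of FLE). It cannot confirm the Factorio 1.1.110 install, which still has to be checked by hand.

```python
import shutil
import sys


def preflight() -> dict:
    """Report which of the listed prerequisites can be confirmed from Python."""
    return {
        "python>=3.10": sys.version_info >= (3, 10),
        "docker on PATH": shutil.which("docker") is not None,
    }


if __name__ == "__main__":
    for check, ok in preflight().items():
        print(f"{'ok' if ok else 'MISSING':8} {check}")
```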

The README contains detailed installation instructions and examples of how to run evaluations with different LLM agents.

We would love to hear your thoughts and see what others can do with this framework!


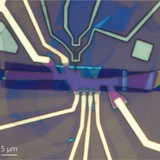

Comment on "Interferometric single-shot parity measurement in InAs-Al hybrid devices", Microsoft Quantum, Nature 638, 651-655 (2025) https://arxiv.org/abs/2503.08944

arXiv.org


We consider the 'parity readout' of a (topological) superconductor claimed in Nature 638, 651-655 (2025). A prerequisite for this claim is the existence of a superconducting gap in the nanowire...


Establishing a New Benchmark in Quantum Computational Advantage with 105-qubit Zuchongzhi 3.0 Processor https://journals.aps.org/prl/abstract/10.1103/PhysRevLett.134.090601

Physical Review Letters


A new high-performance quantum processor boasts 105 superconducting qubits and rivals Google's acclaimed Willow processor.

Just links pinned «At the March Meeting next week. Ping me if you wanna meet in the LA area»

Observation of High-Temperature Dissipationless Fractional Chern Insulator https://arxiv.org/abs/2503.10989

arXiv.org


The fractional quantum anomalous Hall effect has recently been experimentally observed in zero-field fractional Chern insulators (FCI). However, an outstanding challenge is the presence of a...


Bras and Kets in Euclidean Path Integrals https://arxiv.org/abs/2503.12771

arXiv.org


Quantum mechanics requires a hermitian inner product <~,~> (linear in one variable, antilinear in the other), while the inner product (~,~) that comes most naturally from Euclidean path...


PAC-learning of free-fermionic states is NP-hard https://quantum-journal.org/papers/q-2025-03-20-1665/

Quantum


Lennart Bittel, Antonio A. Mele, Jens Eisert, and Lorenzo Leone

Quantum 9, 1665 (2025).

Free-fermionic states, also known as matchgates or Gaussian states, are a fundamental class of quantum states due to their efficient classical simulability and their…


Compute Optimal Scaling of Skills: Knowledge vs Reasoning https://arxiv.org/abs/2503.10061

arXiv.org


Scaling laws are a critical component of the LLM development pipeline, most famously as a way to forecast training decisions such as 'compute-optimally' trading off parameter count and dataset...
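For context, the "compute-optimal" trade-off is usually illustrated with the Chinchilla rule of thumb: training FLOPs C ≈ 6·N·D and roughly 20 training tokens per parameter. The sketch below assumes exactly those generic constants; they are not values from this paper, whose title suggests it examines how the trade-off differs between knowledge and reasoning skills.

```python
from __future__ import annotations

import math


def chinchilla_allocation(compute_flops: float, tokens_per_param: float = 20.0) -> tuple[float, float]:
    """Split a FLOP budget into (parameters N, training tokens D), assuming C = 6*N*D and D = r*N."""
    # C = 6 * N * (r * N)  =>  N = sqrt(C / (6 * r))
    n_params = math.sqrt(compute_flops / (6.0 * tokens_per_param))
    return n_params, tokens_per_param * n_params
```

For example, `chinchilla_allocation(1.2e20)` gives roughly 1e9 parameters and 2e10 tokens. Changing `tokens_per_param` is the knob a skill-dependent scaling law would move.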

BigO(Bench) -- Can LLMs Generate Code with Controlled Time and Space Complexity? https://arxiv.org/abs/2503.15242

arXiv.org


We introduce BigO(Bench), a novel coding benchmark designed to evaluate the capabilities of generative language models in understanding and generating code with specified time and space...
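One generic way to check whether generated code meets a requested complexity is to measure its cost at several input sizes and fit the growth exponent on a log-log scale. The sketch counts steps instead of timing so that it is deterministic; it illustrates the idea only and is not BigO(Bench)'s actual profiling framework. `count_pair_comparisons` is a hypothetical "generated solution".

```python
import math


def fitted_exponent(sizes, costs):
    """Least-squares slope of log(cost) against log(n): about 1 for linear, about 2 for quadratic."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(c) for c in costs]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    den = sum((x - mx) ** 2 for x in xs)
    return num / den


def count_pair_comparisons(n):
    """Toy solution that visits every ordered pair of distinct indices: n * (n - 1) steps."""
    steps = 0
    for i in range(n):
        for j in range(n):
            if i != j:
                steps += 1
    return steps
```

Over sizes 100 to 800 the toy loop yields an exponent close to 2. Real wall-clock timings are noisier, and telling O(n) from O(n log n) needs a wider range of sizes or a model-selection fit rather than a single slope.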

Forwarded from AbstractDL

M-Attack: how to fool GPT-4.5 and Gemini

Everyone is used to the idea that attacking modern multimodal models (GPT-4o, Claude, Gemini, etc.) is extremely hard, especially black-box models with no access to gradients or architecture. Standard "pass one image off as another" attacks often produce vague noise that the model either ignores or answers with something abstract like "a blurry image".

But it turns out the problem was not the models themselves but the way the perturbations were generated. A fresh paper proposes a very simple yet powerful approach, M-Attack:

1. Take the source and target images.

2. At each step, randomly crop a patch of the source image (50-100% of its area) and resize it back to the original size.

3. Push the embeddings of this patch as close as possible to the embeddings of the target image, optimizing in white-box mode over an ensemble of open vision models (e.g., CLIP, ViT, etc.).

That's it! After a few iterations the target semantics "emerge" in the central region of the image, while the perturbations remain very subtle and clean (unlike other approaches).
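The three-step M-Attack recipe can be sketched as a toy. Assumptions, none of them from the post: grayscale images in [0, 1], random linear maps standing in for the CLIP/ViT ensemble (so gradients are analytic), nearest-neighbour resizing, and PGD-style sign-gradient steps inside an L∞ budget `eps`. The budget, step size, and update rule are illustrative choices, not the paper's settings.

```python
import numpy as np


def random_crop_indices(h, w, rng, min_area=0.5, max_area=1.0):
    """Flat pixel indices for 'crop a random 50-100% area patch, resize back to h x w'."""
    side = np.sqrt(rng.uniform(min_area, max_area))  # keep aspect ratio: scale both sides by sqrt(area)
    ch, cw = max(1, int(round(h * side))), max(1, int(round(w * side)))
    top, left = rng.integers(0, h - ch + 1), rng.integers(0, w - cw + 1)
    rows = top + np.arange(h) * ch // h  # nearest-neighbour upsampling of the crop
    cols = left + np.arange(w) * cw // w
    return (rows[:, None] * w + cols[None, :]).ravel()


def unit(v):
    return v / np.linalg.norm(v)


def ensemble_similarity(encoders, x, target):
    """Mean cosine similarity between embeddings of x and target over the encoder ensemble."""
    return float(np.mean([unit(E @ x.ravel()) @ unit(E @ target.ravel()) for E in encoders]))


def m_attack_sketch(x_src, x_tgt, encoders, steps=200, eps=8 / 255, alpha=1 / 255, seed=0):
    """Sign-gradient ascent on the cosine similarity between a random crop and the target embedding."""
    rng = np.random.default_rng(seed)
    h, w = x_src.shape
    targets = [unit(E @ x_tgt.ravel()) for E in encoders]
    x = x_src.astype(float).copy()
    for _ in range(steps):
        idx = random_crop_indices(h, w, rng)
        u = x.ravel()[idx]  # crop-and-resize is pure pixel selection here
        grad_u = np.zeros_like(u)
        for E, t in zip(encoders, targets):
            v = E @ u
            norm = np.linalg.norm(v)
            v_hat = v / norm
            grad_u += E.T @ ((t - (v_hat @ t) * v_hat) / norm)  # d cos(Eu, t) / du
        grad_x = np.zeros(h * w)
        np.add.at(grad_x, idx, grad_u)  # pixels sampled several times accumulate gradient
        x = x + alpha * np.sign(grad_x).reshape(h, w)
        x = np.clip(np.clip(x, x_src - eps, x_src + eps), 0.0, 1.0)  # L-inf budget, valid range
    return x
```

Because a fresh crop is drawn every step, the perturbation has to work for many overlapping views, and pixels near the centre are sampled most often, which is consistent with the post's remark that the target semantics appear in the central region.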

The authors report truly impressive results: the attack success rate (ASR) exceeds 90% (!) for GPT-4.5, GPT-4o, and even o1 and Gemini. The code and a dataset of 100 attacked images have been released publicly.

Paper, GitHub, dataset


Entropy of strongly correlated electrons in a partially filled Landau level https://arxiv.org/abs/2503.16738

arXiv.org


We use high-resolution chemical potential measurements to extract the entropy of monolayer and bilayer graphene in the quantum Hall regime via the Maxwell relation $\left.\frac{d\mu}{dT}\right|_N$...
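For reference, the Maxwell relation the abstract mentions follows from the Helmholtz free energy at fixed area, and integrating it over carrier number is what turns a measured temperature derivative of μ into an entropy. This is the standard textbook derivation, not taken from the paper:

```latex
\begin{aligned}
dF &= -S\,dT + \mu\,dN
\quad\Rightarrow\quad
S = -\left.\frac{\partial F}{\partial T}\right|_N,\qquad
\mu = \left.\frac{\partial F}{\partial N}\right|_T,\\[4pt]
\left.\frac{\partial \mu}{\partial T}\right|_N
&= \frac{\partial^2 F}{\partial T\,\partial N}
= -\left.\frac{\partial S}{\partial N}\right|_T,\\[4pt]
S(N) &= S(N_0) - \int_{N_0}^{N} \left.\frac{\partial \mu}{\partial T}\right|_{N'} dN' .
\end{aligned}
```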

On the Importance of Error Mitigation for Quantum Computation https://arxiv.org/abs/2503.17243

arXiv.org


Quantum error mitigation (EM) is a family of hybrid quantum-classical methods for eliminating or reducing the effect of noise and decoherence on quantum algorithms run on quantum hardware, without...
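Zero-noise extrapolation is one widely used member of this family. It runs the circuit at deliberately amplified noise levels λ ≥ 1 and extrapolates the measured expectation value back to λ = 0. The sketch below is a minimal Richardson (Lagrange) extrapolation. It assumes the noise scales are known exactly and that the expectation value is a smooth, low-order polynomial in λ. It illustrates EM in general and is not a method taken from this paper.

```python
def richardson_zero_noise(scales, values):
    """Extrapolate expectation values measured at noise scales lambda_i to lambda = 0."""
    if len(set(scales)) != len(scales):
        raise ValueError("noise scales must be distinct")
    estimate = 0.0
    for i, (lam_i, e_i) in enumerate(zip(scales, values)):
        weight = 1.0
        for j, lam_j in enumerate(scales):
            if j != i:
                weight *= lam_j / (lam_j - lam_i)  # Lagrange basis polynomial evaluated at 0
        estimate += weight * e_i
    return estimate
```

With scales (1, 2, 3) the weights are 3, −3 and 1, so the estimate is 3E(1) − 3E(2) + E(3). The large, alternating weights also amplify statistical noise in the measured values.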
